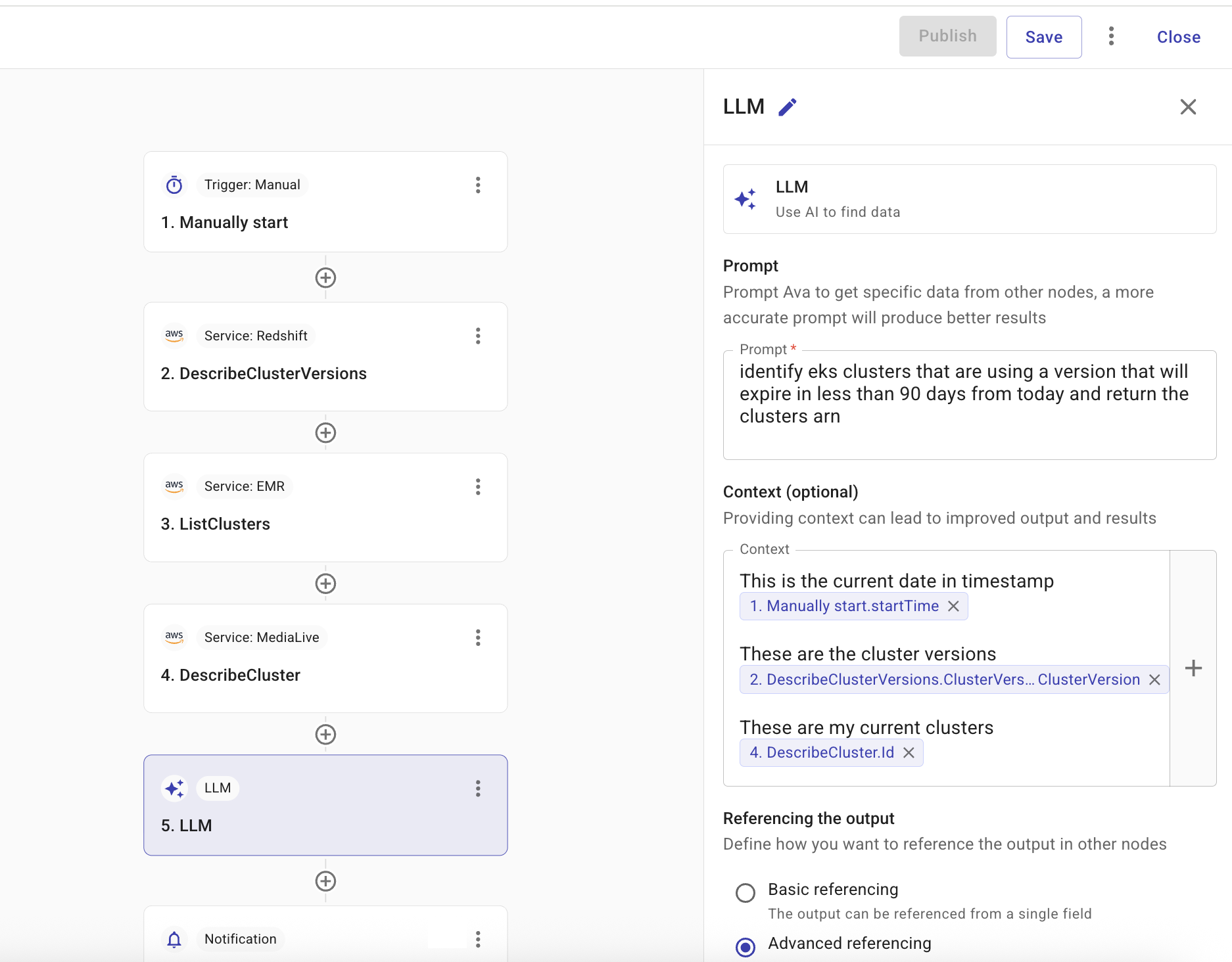

LLM node

The Large Language Model (LLM) node is designed for data processing, analysis, and formatting. It lets you interpret, generate, and transform data using LLMs powered by OpenAI and Amazon Bedrock. Instead of defining complex flow logic, you provide a prompt, such as "Identify EKS clusters that are using a version that will expire in less than 90 days from today and return the clusters' ARNs." The LLM node then makes the resulting data available to other nodes in the flow for further actions.

You can also reference specific fields from previous nodes in the flow to make the output more accurate and consistent. For how to reference values and field types, see Node parameters. The LLM node can output the generated data as a single field or as a JSON object, making it available to the next step in the flow, such as sending a notification.
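To make the example prompt concrete, the Python sketch below shows roughly the deterministic filtering logic that such a prompt stands in for. It is an illustration only, not how the LLM node works internally: the cluster records, the `arn` and `version` field names, and the end-of-support dates are all hypothetical (they are not real AWS EKS support dates), and the function name `expiring_cluster_arns` is made up for this example.

```python
from datetime import date, timedelta

# Hypothetical cluster records; in a real flow, data like this would come
# from a previous node's output.
clusters = [
    {"arn": "arn:aws:eks:us-east-1:111122223333:cluster/demo-a", "version": "1.27"},
    {"arn": "arn:aws:eks:us-east-1:111122223333:cluster/demo-b", "version": "1.30"},
]

# Hypothetical end-of-support dates per Kubernetes version (NOT real AWS dates).
end_of_support = {
    "1.27": date(2025, 1, 15),
    "1.30": date(2026, 7, 1),
}


def expiring_cluster_arns(clusters, end_of_support, today, window_days=90):
    """Return ARNs of clusters whose version reaches end of support
    less than window_days after today (already-expired versions included)."""
    cutoff = today + timedelta(days=window_days)
    arns = []
    for cluster in clusters:
        eos = end_of_support.get(cluster["version"])
        # Skip versions with no known end-of-support date.
        if eos is not None and eos < cutoff:
            arns.append(cluster["arn"])
    return arns


print(expiring_cluster_arns(clusters, end_of_support, today=date(2024, 12, 1)))
```

With `today` set to 2024-12-01, the cutoff is 2025-03-01, so only `demo-a` is returned. Whether already-expired versions should count is a judgment call; here they do, because their date also falls before the cutoff.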

- Prompt: Enter a prompt.
- (Optional) Context: Add dynamic content to give the LLM more context.

- Referencing the output:
  - Basic referencing: The output is referenced as a single field. Use this for simple return values.
  - Advanced referencing: Define a JSON schema so that downstream nodes can reference specific fields in your output individually. After you enter a prompt, you can use Suggest schema to generate a draft JSON schema from the prompt, then refine it in the schema field. See Output schema for how to define the schema. Unlike the standalone JSON Schema examples on json-schema.org, the CloudFlow output schema is not a full JSON Schema document. Do not include `$schema` (for example, the `draft/2020-12` URL) or `$id` in that field; they are not supported and can prevent the schema from behaving as expected.

- Test: Select Test to test the node.
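As a rough sketch of Advanced referencing for the EKS example prompt, the Python below builds a candidate output schema as a dictionary and checks it against the rule above that `$schema` and `$id` must not appear. The field names `clusters`, `arn`, and `version`, and the helper `find_unsupported_keys`, are hypothetical; the sketch only uses common JSON Schema keywords (`type`, `properties`, `items`, `required`), and the exact set of keywords CloudFlow accepts is described in Output schema, not here.

```python
import json

# Hypothetical output schema for the EKS example prompt. It deliberately
# omits "$schema" and "$id", which the output schema field does not support.
output_schema = {
    "type": "object",
    "properties": {
        "clusters": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "arn": {"type": "string"},
                    "version": {"type": "string"},
                },
                "required": ["arn"],
            },
        }
    },
    "required": ["clusters"],
}

UNSUPPORTED_KEYS = ("$schema", "$id")


def find_unsupported_keys(schema):
    """Recursively collect any "$schema" or "$id" keys, at any depth."""
    found = []
    if isinstance(schema, dict):
        for key, value in schema.items():
            if key in UNSUPPORTED_KEYS:
                found.append(key)
            found.extend(find_unsupported_keys(value))
    elif isinstance(schema, list):
        for item in schema:
            found.extend(find_unsupported_keys(item))
    return found


# Print the schema as JSON, ready to paste into the schema field.
print(json.dumps(output_schema, indent=2))
```

A schema copied from a standalone json-schema.org example usually starts with a `$schema` line; running it through a check like this before pasting it into the schema field is one way to catch that.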