Work with AI data

DoiT supports AI services provided by the following: AWS (Amazon Bedrock), Google Cloud (Vertex AI), Microsoft Azure (Azure Machine Learning), Databricks, Anthropic, and OpenAI.

After connecting your GenAI providers, you can start analyzing and monitoring your AI cost and usage.

AI data in Cloud Analytics

DoiT Cloud Analytics refreshes AI data on an hourly basis.

Limitations

- Databricks: Currently, only cost data is available. Token usage data will be supported soon.

- OpenAI: OpenAI cost data reflects final charges, while usage data is for monitoring activity. This means that the costs shown in reports may not always match your actual usage. If you need more details, check the OpenAI API Usage Dashboard.

- OpenAI audio input tokens: OpenAI Costs API aggregates the costs for cached and non-cached audio input tokens together. Because of this, GenAI Intelligence does not separate OpenAI audio SKUs into cached and non-cached – the cost for audio input tokens is always combined.

You can get AI data through dimensions and metrics. See below for the mapping between the DoiT and AI terminologies.

Basic metrics

| DoiT term | AI term | AI definition |
|---|---|---|
| cost | cost | The total cost for a specific resource or usage. |
| usage | usage | The token usage. Depending on the specific service, model, or operation being tracked, the usage metric also includes consumption measured in seconds, bytes, characters, and so on. |

Standard dimensions

The table below lists the standard dimensions available for all AI models that are integrated with the DoiT platform.

| DoiT term | Description |
|---|---|
| Billing Account | The unique identifier for a specific organization in your AI account. |
| Project ID/Account ID | The unique identifier for your AI provider workspace. |
| Service ID/Service Description/SKU Description | The high-level description of the type of AI product or capability used. For example, Completions API, Embeddings API, Claude haiku3.5 Usage - Input tokens, Web Search Usage. |
| SKU ID/SKU Description | The specific, granular and billable unit within the AI service that you are using. For example, chatgpt-4o-latest, input, text-embedding-3-large, claude-3-5-haiku-20241022, input_tokens, claude-sonnet-4-20250514, output_tokens. |
| Usage | The primary unit of usage. For most providers the primary unit is token, but they also use other units depending on the AI service you are consuming. |
| Operation | A distinct, billable action performed by an AI service. For example, input, cached input, web_search, image. |
| Provider | The name of the AI provider, for example, OpenAI, Anthropic, Google Cloud, Amazon Web Services, Azure, or Databricks. |

GenAI labels

Below are the GenAI system labels that you can use in the DoiT platform. The GenAI system labels are grouped in the GenAI section.

| Label | Description | Provider |
|---|---|---|
| API Key ID | The unique identifier of the AI API key. | Anthropic, OpenAI |
| API Key Name | The name of the AI API key. | Anthropic, OpenAI |
| Base Model | The identifier of an AI model offering. For example, gpt-4.1, claude-haiku-4.5. | Anthropic, OpenAI |
| Cached | Indicates whether the operation used cached tokens for cost optimization: true or false. | Anthropic, Azure, OpenAI |
| Consumption Model | The pricing model used for AI services: PAYG (Pay-As-You-Go) or Provisioned Throughput. | Azure, GCP |
| Context Window | A context window restriction applied to the AI model used. One of the following: 0-200k, 200k-1M. | Anthropic |
| Feature | The type of AI capability or service feature being used. Vertex AI examples include Model Serving, Model Serving via Model Garden, Vertex Colab, Metadata storage. Microsoft Azure examples include Model Serving, Audio Generation, Embeddings. | Azure, GCP |
| GenAI Spend | The costs of any generative AI workloads irrespective of AI provider. | Anthropic, AWS, Azure, Databricks, GCP, OpenAI |
| Is Model Serving | Indicates whether the service is actively serving a model: true or false. Available for Vertex AI and Amazon Bedrock. | Azure, GCP |
| Media Format | For models that support multiple media types, the media format distinguishes whether the service was processing audio, images, or text. For example, audio. | Anthropic, AWS, OpenAI |
| Model | The identifier of an AI model offering. For example, gpt-4o-audio-preview. | Anthropic, AWS, Azure, Databricks, GCP, OpenAI |
| Model Family | A group of related AI models that share a common architecture and training methodology. For example, Claude, Gemini, GPT-5, Mistral. | Anthropic, AWS, Azure, Databricks, GCP, OpenAI |
| Model Version | The version of an AI model offering. For example, 2024-12-17. | Anthropic, OpenAI |
| Organization Name | The unique identifier for the AI organization. | Anthropic, AWS, Azure, OpenAI |
| Project | Same as Workspace ID in Anthropic. | Anthropic |
| Resolution | The resolution of an image in a single usage. Used in OpenAI models that support image processing. | OpenAI |
| Service Tier | Service tiers are used in the Anthropic API to prioritize API availability for specific workflows. Can be Priority, Standard, or Batch. | Anthropic |
| Unit Category | The billing unit type for Azure AI services. Values include Commitment, Tokens, Batch, Time or Time Short, Period, and Request. | Azure |
| Usage Type | The direction of token flow in the AI model interaction: input (tokens sent to the model) or output (tokens generated by the model). | Anthropic, AWS, Azure, Databricks, GCP, OpenAI |
| User ID | The unique identifier of a specific user in your AI organization. | Anthropic, OpenAI |
| User Name | The name of a specific user in your AI organization. | Anthropic, OpenAI |
| Workspace | The workspace name in the Anthropic console. | Anthropic |
| Workspace ID | The ID of the workspace in the Anthropic console. | Anthropic |

Example reports

The GenAI Intelligence dashboard contains several preset report widgets to help you jump-start your GenAI spend and usage analysis. You can adjust the configuration of a preset report or create your own from scratch to dive deeper into GenAI data.

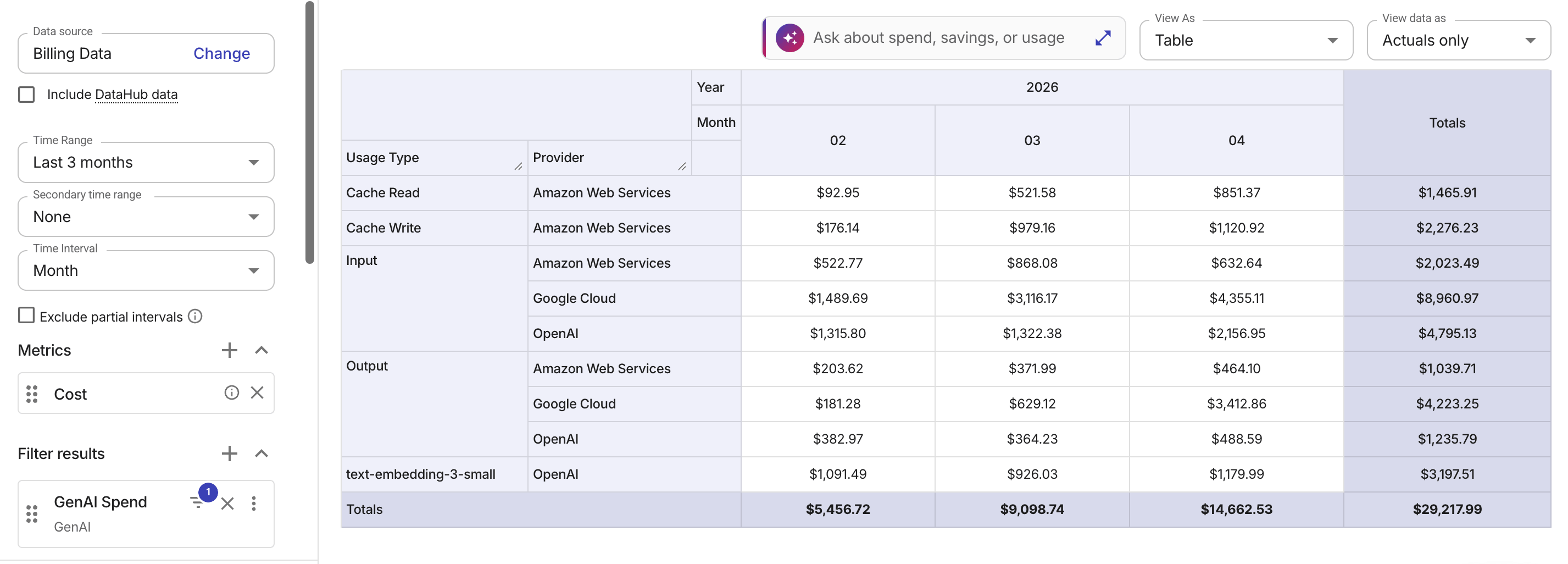

Cost by usage type

The example below groups costs by the normalized Usage Type dimension across AI providers.

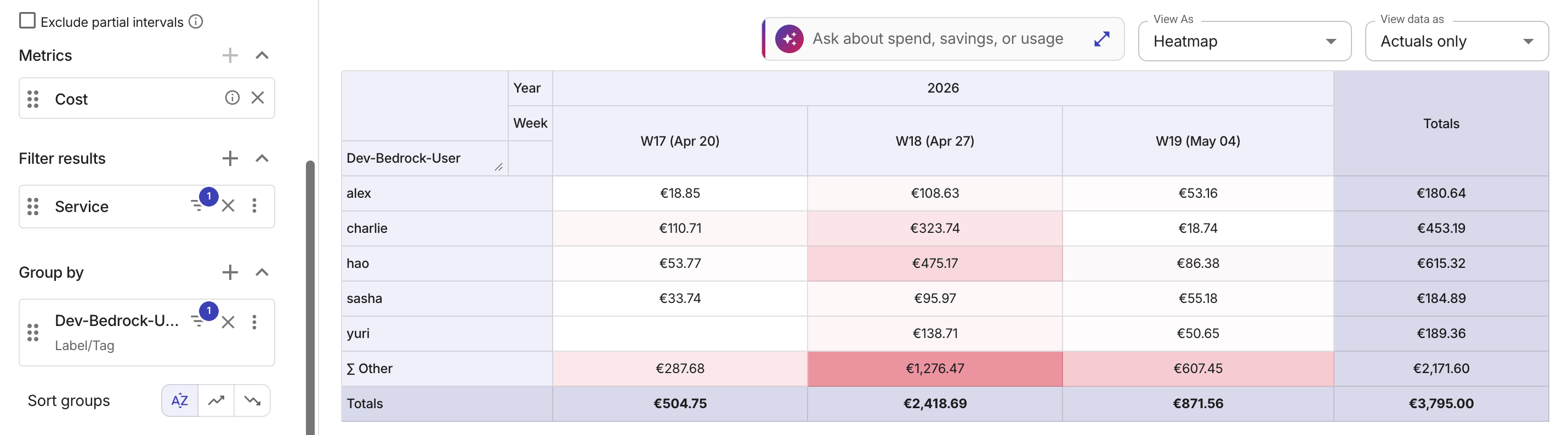
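The same grouping can be reproduced offline on exported report data. The snippet below is a minimal sketch, not a DoiT API: the row layout and the keys `provider`, `usage_type`, and `cost` are assumptions made for illustration, and the figures are made-up sample values.

```python
from collections import defaultdict

# Hypothetical exported report rows; the keys and values are illustrative only.
rows = [
    {"provider": "OpenAI", "usage_type": "input", "cost": 12.50},
    {"provider": "OpenAI", "usage_type": "output", "cost": 30.00},
    {"provider": "Anthropic", "usage_type": "input", "cost": 8.25},
    {"provider": "Anthropic", "usage_type": "output", "cost": 19.75},
]


def cost_by_usage_type(rows):
    """Sum cost per Usage Type value, regardless of provider."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["usage_type"]] += row["cost"]
    return dict(totals)


totals = cost_by_usage_type(rows)
for usage_type, cost in sorted(totals.items()):
    print(f"{usage_type}: {cost:.2f}")
```

Because Usage Type is normalized to input and output for every provider, a single key is enough to combine rows from different providers.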

Amazon Bedrock cost by user

Standard AWS billing aggregates Bedrock costs by model and region. For granular cost tracking and allocation, AWS recommends Application Inference Profiles (AIPs); see the AWS re:Post article How to Track and Limit Amazon Bedrock Usage by User.

To track Bedrock usage by user in the DoiT platform:

- Create an AIP for each user via the Bedrock console or API.

- Apply custom cost allocation tags and use the AIP ARN in your inference API calls instead of the base model ARN.

- Activate tags in the AWS Billing and Cost Management console.

- Activate AWS cost allocation tags in the DoiT platform. Depending on your account type, you may need to reactivate your tags.
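The first two steps can be sketched with the AWS SDK for Python. This is a minimal sketch under stated assumptions, not a prescribed setup: it expects boto3 `bedrock` and `bedrock-runtime` clients to be passed in, and the profile naming scheme (`aip-<user>`) and the tag key `bedrock-user` are hypothetical choices for illustration.

```python
def create_user_profile(bedrock, user, model_arn):
    """Create an Application Inference Profile tagged with the user's name.

    `bedrock` is expected to be a boto3 "bedrock" (control plane) client.
    """
    response = bedrock.create_inference_profile(
        inferenceProfileName=f"aip-{user}",  # hypothetical naming scheme
        modelSource={"copyFrom": model_arn},  # base model the profile wraps
        tags=[{"key": "bedrock-user", "value": user}],  # hypothetical tag key
    )
    return response["inferenceProfileArn"]


def ask(runtime, profile_arn, prompt):
    """Send a prompt through the profile ARN instead of the base model ARN.

    `runtime` is expected to be a boto3 "bedrock-runtime" client.
    """
    response = runtime.converse(
        modelId=profile_arn,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return response["output"]["message"]["content"][0]["text"]


# Example wiring (requires boto3 and AWS credentials; placeholders left as-is):
#   import boto3
#   bedrock = boto3.client("bedrock")
#   runtime = boto3.client("bedrock-runtime")
#   arn = create_user_profile(bedrock, "alice", "<base-model-arn>")
#   print(ask(runtime, arn, "Hello"))
```

Passing the clients in as arguments keeps the two functions easy to exercise with stand-in objects before running them against a real AWS account. The tag key chosen here is the one you would then activate in the AWS Billing and Cost Management console and in the DoiT platform.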

After a tag appears in AWS billing data, it can take 6–8 hours before the tag shows up in Cloud Analytics reports; for a new tag, it can take up to 24 hours. See Limitations for latency and other restrictions.

The example below shows the top five users by Amazon Bedrock costs in the last three weeks, grouped by a custom cost allocation tag.